AI Hallucinations Are Your Fault, Not AI's

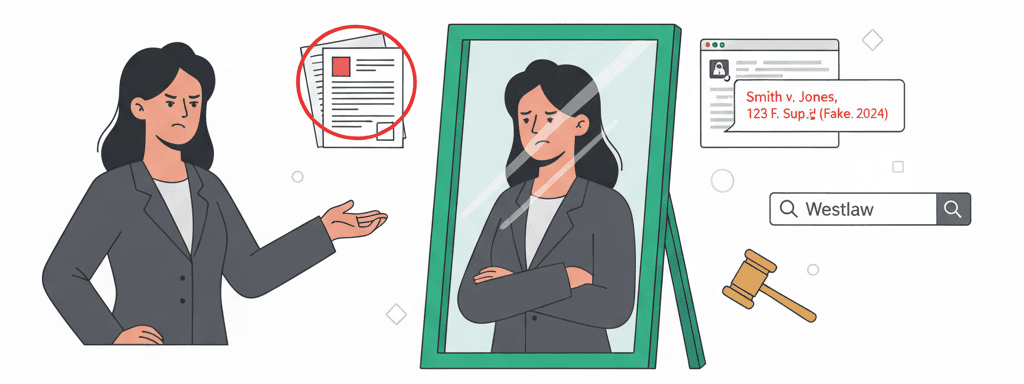

Stop whining about fake cases. You're the one who copy-pasted without checking. AI hallucinates because it's creative - that's not a bug, it's why it's useful. Here's how to use it without losing your license.

The Uncomfortable Truth

AI hallucinates because it's creative. That's not a bug - it's literally why it's useful. The same system that can dream up a novel legal argument or spot patterns across 1,000 documents is going to occasionally invent "Hatfield v. McCoy, 2021" when it needs a citation.

If AI couldn't hallucinate, it would be Google. Congratulations, you'd have a really expensive search bar.

Here's what actually happened to those sanctioned lawyers: They treated AI like a paralegal they could blame. They copy-pasted AI output into their briefs, walked into court, and acted shocked - SHOCKED! - when opposing counsel couldn't find their cases.

That's not an AI problem. That's a "you forgot how to be a lawyer" problem.

You Already Know How to Handle This

Every source you work with lies or gets things wrong:

- Clients lie about facts that hurt their case

- Witnesses misremember critical details

- Senior partners cite outdated law from memory

- Paralegals make typos that change meaning

- Even Westlaw has errors in its databases

You verify all of those. Why did your brain turn off when AI entered the picture?

Would you file a brief written by a first-year associate without reviewing it? Would you quote a client's version of events without investigation? Would you cite a case from memory without pulling it up?

No? Then why the hell are you doing it with AI?

The Real Problem (It's You)

Lawyers getting burned by hallucinations all make the same three mistakes:

1. They use AI like it's Lexis

"Find me cases about X" is the wrong prompt. AI isn't a database - it's a thinking partner. Ask it to analyze patterns, generate arguments, spot weaknesses. Not to be your citation machine.

2. They never learned to prompt properly

Garbage in, garbage out. If you say "write a motion to dismiss," you get generic trash with made-up cases. If you say "write a motion to dismiss based on these specific facts [paste facts] under Rule 12(b)(6), focusing on failure to state a claim, DO NOT cite specific cases - I'll add those myself" - you get something useful.

3. They're too lazy to spend 2 minutes verifying

This is the one that pisses me off. You went to law school. You passed the bar. You know how to Shepardize. But somehow copy-pasting a case name into Westlaw is too much work?
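If you want the specific prompt from mistake 2 to be a habit instead of something you retype, here is a minimal sketch in Python. The function name `build_motion_prompt` and the `[CITE NEEDED]` marker are my own assumptions for illustration, not part of any tool. All it does is glue your facts, the rule, and a "no citations" instruction into one prompt.

```python
def build_motion_prompt(facts: str, rule: str, focus: str) -> str:
    """Assemble a drafting prompt that supplies facts and forbids case citations.

    Hypothetical helper: the name and the [CITE NEEDED] marker are illustrative.
    """
    if not facts.strip():
        # A prompt with no facts is the "generic trash" scenario.
        raise ValueError("Paste the specific facts before asking for a draft.")
    return (
        f"Write a motion to dismiss under {rule}, focusing on {focus}.\n"
        f"Base it only on these facts:\n{facts.strip()}\n"
        "DO NOT cite specific cases. Mark each spot that needs authority "
        "with [CITE NEEDED]; I will add citations myself."
    )


prompt = build_motion_prompt(
    facts="(paste your facts here)",
    rule="Rule 12(b)(6)",
    focus="failure to state a claim",
)
print(prompt)
```

The point of the placeholder marker is that every gap in authority stays visible until you fill it with a case you actually pulled and read.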

How to Use AI Without Losing Your License

The 2-Minute Verification Rule

This is the bare minimum. You should still do your normal verification process. But if you're not going to do anything else, at least do this:

For every AI-generated claim, citation, or fact:

- Paste the case name into your legal database (30 seconds)

- Confirm the holding matches AI's description (30 seconds)

- Check the pincite actually says what AI claims (1 minute)

That's it. Two minutes saves your reputation.
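If a checklist helps you stay honest, the three checks can be tracked per citation. This is a rough sketch, not a product: the `CitationCheck` class and its field names are assumptions I made up for illustration, and you still do the checking by hand in your legal database. The code only records what you confirmed and lists what you haven't.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CitationCheck:
    """One AI-supplied citation and the three manual checks (hypothetical structure)."""
    citation: str
    found_in_database: bool = False  # case name located in your legal database
    holding_matches: bool = False    # holding agrees with the AI's description
    pincite_matches: bool = False    # pincite says what the AI claims

    def is_verified(self) -> bool:
        # A citation counts only when all three checks pass.
        return (self.found_in_database
                and self.holding_matches
                and self.pincite_matches)


def unverified(checks: List[CitationCheck]) -> List[str]:
    """Return the citations that have not passed every check."""
    return [c.citation for c in checks if not c.is_verified()]


checks = [
    CitationCheck("Example v. Placeholder", True, True, True),  # made-up name
    CitationCheck("Hatfield v. McCoy, 2021"),  # the invented citation from earlier
]
print(unverified(checks))
```

Anything still in that list does not go in the brief.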

Better: Make AI Do the Work Differently

Stop asking for citations. Start asking for:

- Argument structures

- Fact patterns that might support your position

- Counterarguments to prepare for

- Questions to ask in deposition

- Themes for jury instructions

- Ways to distinguish unfavorable precedent

Then YOU add the citations from cases you actually pulled and read.

Even Better: Use Hallucinations as Features

Tell AI to make stuff up on purpose:

- "Imagine a case that would perfectly support my argument. What would the fact pattern look like?"

- "Create a hypothetical statute that would solve my client's problem"

- "What would the ideal expert witness say?"

Now you know what to look for. That imaginary perfect case? Search for something similar. That hypothetical statute? Check if any jurisdiction has tried it. That ideal expert testimony? Find someone who might actually say it.

The Verification Workflow That Actually Works

Step 1: Generate ideas with AI

"Give me 5 different legal theories that might support [specific situation]"

Step 2: Research real law yourself

Take those theories, search real databases, and find real cases.

Step 3: Feed real law back to AI

"Here are three actual cases [paste summaries]. How can I distinguish or use them?"

Step 4: Verify everything one more time

Before filing, every citation gets clicked and checked.

Step 5: Take credit for good work

You did the legal research. AI just helped you think.
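For Step 4, it helps to have a list of everything in the draft that looks like a case name so nothing gets skipped. Below is a deliberately crude sketch using a regular expression. The pattern is my own assumption, not a real citation parser: it will over-capture a capitalized word that comes right before a party name (for example "See Smith v. Jones"), it cuts off party names at abbreviations like "Indus.", and it ignores statutes and reporter cites entirely. Treat the output as a to-do list, not as proof you found everything.

```python
import re

# Rough heuristic (an assumption, not a citation standard):
# capitalized word(s), then "v.", then capitalized word(s).
CASE_NAME = re.compile(
    r"\b(?:[A-Z][\w'&.-]*\s)+v\.\s[A-Z][\w'&-]*(?:\s[A-Z][\w'&-]*)*"
)


def case_names(draft: str) -> list:
    """Return distinct case-name-like strings, in order of first appearance."""
    seen = []
    for match in CASE_NAME.finditer(draft):
        name = match.group(0)
        if name not in seen:
            seen.append(name)
    return seen


# Made-up draft text with made-up case names, for illustration only.
draft = (
    "The claim fails under Smith v. Jones, which controls here. "
    "Plaintiff relies on Acme Corp. v. Doe Industries, but that case is "
    "distinguishable. Again, Smith v. Jones governs."
)
for name in case_names(draft):
    print("[ ] verify:", name)
```

Every line it prints is a citation you click and check before filing. Then skim the draft yourself for anything the pattern missed.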

Stop Making Excuses

"But AI should be more accurate!" So should your clients. Deal with it.

"But it sounds so confident!" So does opposing counsel. You still verify their citations.

"But I trusted the technology!" You trust your car but you still watch the road.

"But everyone's using it wrong!" Good. Let them get sanctioned while you use it right.

The Bottom Line

AI hallucinations aren't going away. They're part of how large language models work. You have three options:

- Whine about it and stay scared of using AI (while your competition speeds past you)

- Ignore it and get sanctioned (while your malpractice carrier drops you)

- Work with it like a professional who understands their tools

The lawyers winning with AI aren't the ones who found "hallucination-free" models (they don't exist). They're the ones who remember they're still lawyers. They verify. They think. They take responsibility.

Use AI for what it's good at: Creative thinking, pattern recognition, first drafts, devil's advocacy.

Use your brain for what it's good at: Judgment, verification, actual legal research, taking responsibility.

And for the love of all that's holy, stop copy-pasting anything from anywhere without reading it first. That's not an AI problem. That's a you problem.

Next time you're tempted to blame AI for a hallucination, ask yourself: Would I blame spell-check for not catching that I cited the wrong statute? No? Then stop blaming AI for your failure to do basic diligence.
